Title: Embodied remembering in coordinated performances

Abstract: Drawing on multimodal conversation analysis and past literature on synchronization, this study sheds light on the temporal properties of embodied remembering, which we define as co-operative enactment(s) of a mutually-established recollectable. Our main argument is that the nature of a recollectable shapes the practical organization of embodied remembering. To demonstrate this, we investigate the phenomenon in three performance-based settings: (a) taiko ensemble rehearsal, (b) Korean TV show, and (c) ESL service-learning reflection. In each setting, participants jointly produce a (quasi-)synchronized performance, but for different purposes: to advocate one version of choral chanting against the other, to demonstrate one’s knowledge of choreographic moves and understanding of an expert correction in the pursuit of humor, and finally, to foster peer solidarity through nonserious competition. Detailed analysis uncovers varying degrees of performative precision, through which participants display their in-situ understanding of the consequentiality of achieved synchrony for the task-at-hand. The temporal unfolding of embodied remembering is locally shaped by participants’ mutual orientation to a given activity context and the nature of a recollectable. Participants’ orientation to relevant performative precision is embodied in the very way they enact the recollectable.

Choe, A. T., & Yagi, J. (2023). Embodied remembering in coordinated performances. Multimodal Communication, 12(2), 99–122. https://doi.org/10.1515/mc-2022-0029

---

Title: Developer involvement and COI disclosure in high-stakes English proficiency test validation research: A systematic review

Abstract: Language test developers play a critical role in gathering evidence to support test score interpretations and real-world uses, but independent research is necessary to put claims of validity to the test (Kane, 2013). This study systematically reviews the prevalence of developer involvement in high-stakes English proficiency test research published in five peer-reviewed language testing journals from 2016 to 2021, focusing on aspects of validity addressed, research methodology, and disclosure of relevant conflicts of interest (COI). Nearly half of the 181 included studies featured some degree of developer involvement, in terms of authorship or funding, and developer-involved studies had some notable methodological advantages, including larger sample sizes and access to official test materials/data. COI reporting was generally poor, with only 4–7% of studies with potential conflicts making adequate disclosures. We discuss implications of these findings in terms of evaluating tests and make recommendations for greater transparency in COI reporting and validation research.

Isbell, D. R., & Kim, J. (2023). Developer involvement and COI disclosure in high-stakes English proficiency test validation research: A systematic review. Research Methods in Applied Linguistics, 2(3), 100060. https://doi.org/10.1016/j.rmal.2023.100060

---

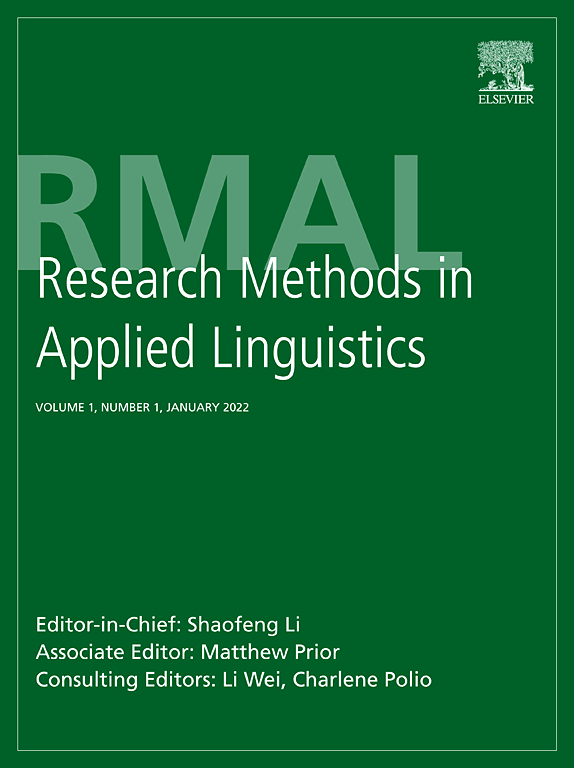

Title: Speaking performances, stakeholder perceptions, and test scores: Extrapolating from the Duolingo English Test to the university

Abstract: The extrapolation of test scores to a target domain—that is, association between test performances and relevant real-world outcomes—is critical to valid score interpretation and use. This study examined the relationship between Duolingo English Test (DET) speaking scores and university stakeholders’ evaluation of DET speaking performances. A total of 190 university stakeholders (45 faculty members, 39 administrative staff, 53 graduate students, 53 undergraduate students) evaluated the comprehensibility (ease of understanding) and academic acceptability of 100 DET test-takers’ speaking performances. Academic acceptability was judged based on speakers’ suitability for communicative roles in the university context including undergraduate study, group work in courses, graduate study, and teaching. Analyses indicated a large correlation between aggregate measures of comprehensibility and acceptability (r = .98). Acceptability ratings varied according to role: acceptability for teaching was held to a notably higher standard than acceptability for undergraduate study. Stakeholder groups also differed in their ratings, with faculty tending to be more lenient in their ratings of comprehensibility and acceptability than undergraduate students and staff. Finally, both comprehensibility and acceptability measures correlated strongly with speakers’ official DET scores and subscores (r ⩾ .74–.89), providing some support for the extrapolation of DET scores to academic contexts.

Isbell, D., Crowther, D., & Nishizawa, H. (online first). Speaking performances, stakeholder perceptions, and test scores: Extrapolating from the Duolingo English Test to the university. Language Testing. https://doi.org/10.1177/02655322231165984